How to Measure Your Brand Visibility in ChatGPT, Perplexity, Gemini & Claude (2026 Guide)

Your brand is either showing up in AI answers — or it’s not. And right now, most businesses have no idea which one it is.

The queries people are typing into ChatGPT, Perplexity, and Gemini are the same queries they used to type into Google. The difference? There are no “ten blue links” to scroll through. The AI names a handful of brands at most, and everyone else is invisible.

This guide covers exactly how to measure, track, and improve your brand’s AI visibility in 2026.

Why Can’t Traditional Analytics Measure AI Visibility?

Google Analytics doesn’t track this. Your SEO platform doesn’t track this. Your social listening tool definitely doesn’t track this.

When someone asks ChatGPT “What is the best project management tool for remote teams?” — and your product doesn’t appear — there is no click, no impression, no bounce rate to analyze. The opportunity just evaporates silently.

That is the core challenge of AI visibility measurement: absence leaves no data. Traditional analytics record what visitors do once they reach your site, so a recommendation you never received never appears in any report.

What Are the 5 AI Visibility Metrics That Actually Matter?

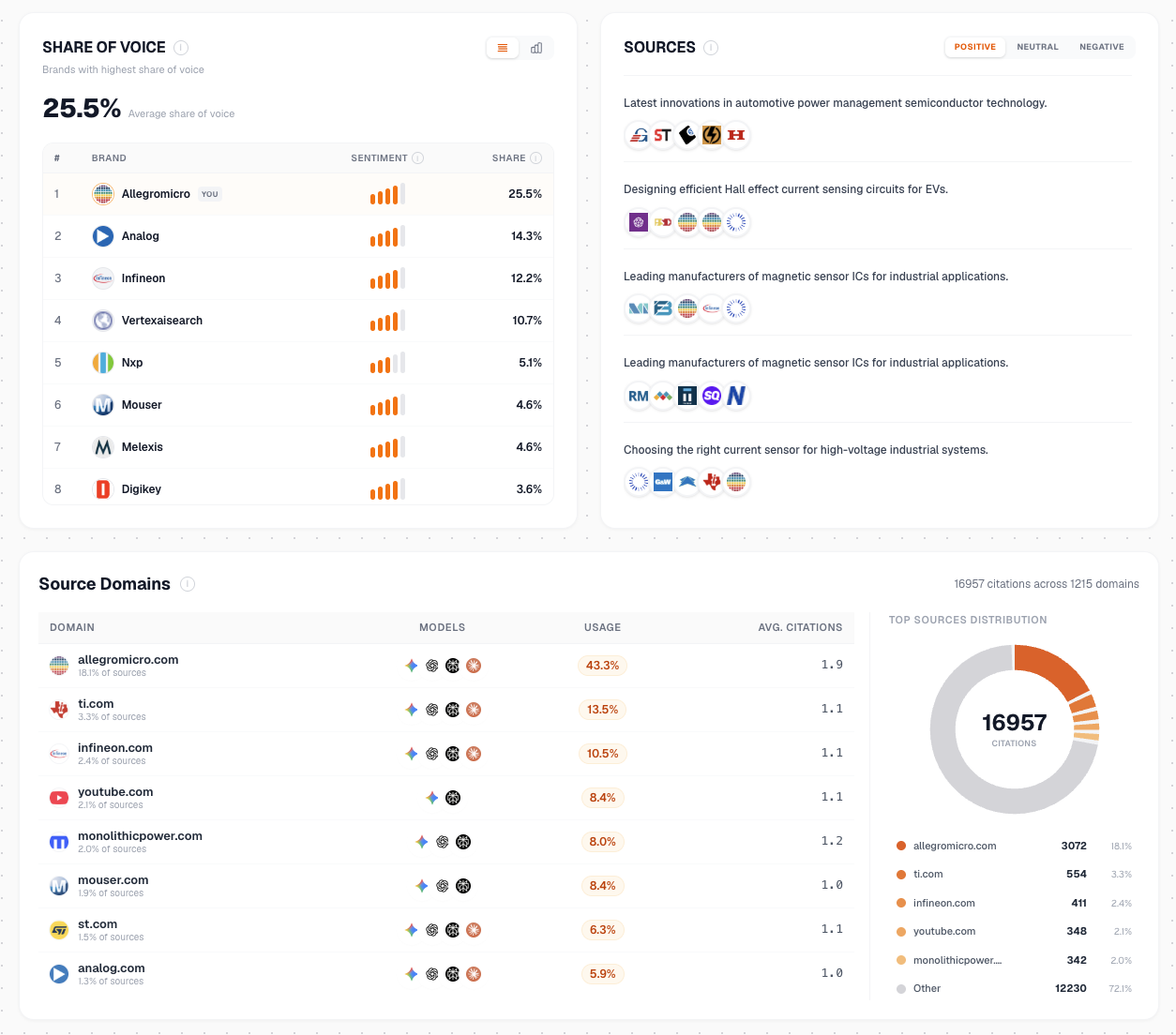

The five core AI visibility metrics are: visibility rate (how often your brand appears), rank position (where you appear in the response), sentiment score (how the AI describes you), citation sources (where the AI pulls data from), and share of voice (your mention frequency vs. competitors). These fill the gap that traditional SEO metrics leave for AI answers. Here’s how each one works.

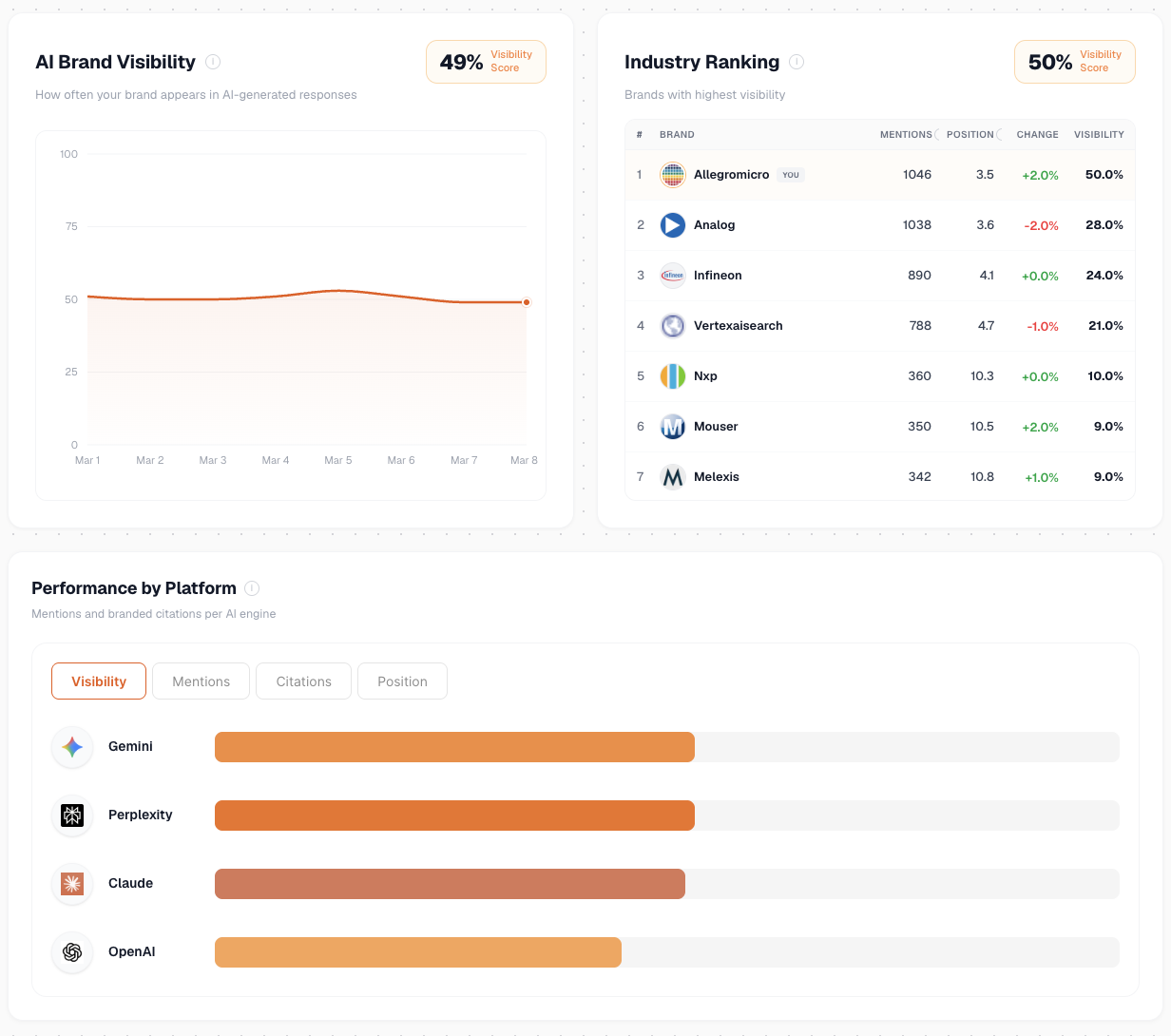

1. Visibility Rate

What it measures: The percentage of relevant prompts where your brand appears in the AI response.

If you test 50 prompts relevant to your business and your brand shows up in 15 of them, your visibility rate is 30%.

This is the single most important metric. It tells you the probability that a potential customer will encounter your brand when asking AI for help.

| Visibility Rate | Assessment |
|---|---|
| 0-10% | Invisible: urgent action needed |
| 10-30% | Low: significant gaps to close |
| 30-60% | Moderate: room for improvement |
| 60-80% | Strong: competitive position |
| 80%+ | Dominant: market leader in AI |
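The arithmetic behind the visibility rate can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula from any tool: the function names `visibility_rate` and `assess` are made up for this example, and because the bands in the table share their boundaries, the sketch assumes a boundary value (10, 30, 60, 80) falls into the higher band.

```python
def visibility_rate(mentions: int, prompts_tested: int) -> float:
    """Percentage of tested prompts in which the brand appeared."""
    if prompts_tested <= 0:
        raise ValueError("prompts_tested must be positive")
    return 100.0 * mentions / prompts_tested


def assess(rate: float) -> str:
    """Map a rate to the assessment bands.

    Assumption: a shared boundary (10, 30, 60, 80) belongs to the higher band.
    """
    if rate >= 80:
        return "Dominant"
    if rate >= 60:
        return "Strong"
    if rate >= 30:
        return "Moderate"
    if rate >= 10:
        return "Low"
    return "Invisible"


# The example from the text: 15 appearances across 50 prompts.
rate = visibility_rate(15, 50)
print(f"{rate:.0f}% -> {assess(rate)}")  # 30% -> Moderate
```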

2. Rank Position

What it measures: Where your brand appears in the AI response — first mention, second, fifth, or buried in a list.

Just like Google rankings, position matters. Being the first brand mentioned in a ChatGPT response carries significantly more weight than being the fourth. First-position brands tend to:

- Earn more trust from the reader

- Be read as the “recommended” choice

- Stay in the user’s memory longer
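One simple way to record rank position is to order brands by where each is first mentioned in the response text. The sketch below does that with naive, case-insensitive substring matching, which is an assumption of this example: a real audit would need to handle brand aliases and names that are substrings of other words. The brand names are placeholders.

```python
from typing import Optional


def rank_positions(response: str, brands: list[str]) -> dict[str, Optional[int]]:
    """Rank brands by first mention in the response (1 = mentioned first).

    Brands that never appear get None.
    """
    text = response.lower()
    first_seen = {brand: text.find(brand.lower()) for brand in brands}
    # Keep only brands that were found, ordered by character offset.
    ordered = sorted((pos, brand) for brand, pos in first_seen.items() if pos >= 0)
    ranks: dict[str, Optional[int]] = {brand: None for brand in brands}
    for rank, (_, brand) in enumerate(ordered, start=1):
        ranks[brand] = rank
    return ranks


answer = "Globex is a popular choice. Acme is also worth a look."
print(rank_positions(answer, ["Acme", "Globex", "Initech"]))
# {'Acme': 2, 'Globex': 1, 'Initech': None}
```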

3. Sentiment Score

What it measures: How the AI describes your brand — positive, neutral, or negative.

Being mentioned is not enough. If ChatGPT says “Brand X is known for reliability but has faced criticism for customer support” — that’s a mixed signal that could hurt conversions.

Track sentiment per engine:

- Positive: AI recommends your brand, highlights strengths

- Neutral: AI mentions your brand factually, no strong opinion

- Negative: AI flags issues, complaints, or weaknesses
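Tracking sentiment per engine amounts to tallying labels. The sketch below assumes each response has already been labeled positive, neutral, or negative (by a reviewer or a classifier, which is outside this example) and only shows the per-engine count. The engine keys and the function name are illustrative.

```python
from collections import Counter, defaultdict


def sentiment_by_engine(observations):
    """Count sentiment labels per engine from (engine, label) pairs."""
    tally = defaultdict(Counter)
    for engine, label in observations:
        tally[engine][label] += 1
    return {engine: dict(counts) for engine, counts in tally.items()}


labeled = [
    ("chatgpt", "positive"),
    ("chatgpt", "negative"),
    ("chatgpt", "positive"),
    ("gemini", "neutral"),
]
print(sentiment_by_engine(labeled))
# {'chatgpt': {'positive': 2, 'negative': 1}, 'gemini': {'neutral': 1}}
```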

4. Citation Sources

What it measures: Which websites the AI cites when mentioning (or not mentioning) your brand.

This reveals where AI models are pulling their information from. If your competitors are getting cited because of strong Reddit presence or industry review sites, you know exactly where to focus your efforts.
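If you log the URLs an engine cites, grouping them by domain shows which sources dominate. This is a rough sketch: the URLs are placeholders, and stripping a leading `www.` is a simplification chosen for this example rather than a full domain-normalization scheme.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(urls, n=5):
    """Return the n most frequently cited domains as (domain, count) pairs."""
    domains = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that are not absolute URLs
            domains[host] += 1
    return domains.most_common(n)


cited = [
    "https://www.reddit.com/r/example/1",
    "https://reddit.com/r/example/2",
    "https://reviews.example.com/best-crm",
]
print(top_cited_domains(cited))
# [('reddit.com', 2), ('reviews.example.com', 1)]
```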

5. Share of Voice

What it measures: How often your brand is mentioned vs. competitors across the same prompt set.

If you and three competitors are all relevant for “best CRM for startups” — share of voice tells you who the AI favors and by how much.
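Share of voice can be computed in more than one way. The sketch below assumes one common reading: each brand's share of all brand mentions across the prompt set, counting a brand at most once per response. The brand names are placeholders.

```python
from collections import Counter


def share_of_voice(mentions_per_response):
    """Percentage of all brand mentions that belong to each brand.

    mentions_per_response: one list of mentioned brands per AI response.
    Assumption: a brand counts at most once per response.
    """
    counts = Counter(
        brand for brands in mentions_per_response for brand in set(brands)
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: round(100 * n / total, 1) for brand, n in counts.items()}


responses = [["Acme", "Globex"], ["Globex"], ["Globex", "Initech"], []]
print(share_of_voice(responses))
# Acme 20.0, Globex 60.0, Initech 20.0 (dict order may vary)
```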

How Do You Run an AI Visibility Audit?

The Manual Method (Free, But Inconsistent)

You can test this yourself right now:

- List 10-20 prompts your customers would ask an AI assistant

- Run each prompt in ChatGPT, Gemini, and Perplexity

- Record: Does your brand appear? What position? What sentiment?

- Screenshot the responses for your records

- Repeat monthly to track changes

The problem? AI responses vary by session, user context, and model version. What you see today might be completely different tomorrow. Manual audits are a starting point, not a system.

The Automated Method (Repeatable, Scalable)

Tools like Sanbi.ai automate the entire process:

- Enter your domain

- The platform generates and runs real prompts across ChatGPT, Gemini, Perplexity, and Claude

- You get a structured report with visibility scores, rank positions, sentiment analysis, and citation sources

- Track changes over time with daily monitoring

- Compare against competitors on the same prompt set

The key difference is consistency — every audit uses the same prompts, the same methodology, so you can actually compare results week over week.

Quick start: Run a free AI visibility audit — takes 2 minutes, no signup required. You’ll see your visibility score, sentiment, and which engines mention your brand.

Which AI Platforms Should You Prioritize for Visibility?

The four AI platforms that matter most for brand visibility are ChatGPT (powered by Bing), Gemini (powered by Google’s Knowledge Graph), Perplexity (live web crawling), and Claude (training data consensus). Each has different data sources, optimization levers, and biases — here’s how to approach each one.

ChatGPT

- Data source: Bing search index + training data

- Optimization lever: Rank well on Bing, submit sitemap to Bing Webmaster Tools

- Key insight: ChatGPT’s browsing mode pulls from Bing in real-time. If Bing can’t find you, ChatGPT can’t cite you

- Schema impact: High — JSON-LD helps ChatGPT verify entity facts

Gemini

- Data source: Google’s Knowledge Graph + Search index

- Optimization lever: Strong Google Business Profile, comprehensive schema markup

- Key insight: Gemini is tightly integrated with Google’s entity database. If you exist as a verified entity in the Knowledge Graph, Gemini is far more likely to mention you confidently

- Brand confusion risk: If another company shares your name, Gemini may mix your data. Fix this with clear Organization schema and Wikidata entries
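As a rough illustration of the Organization schema mentioned above, the sketch below builds a minimal JSON-LD block. `@context`, `@type`, `name`, `url`, and `sameAs` are standard schema.org properties; the company name, the URL, and the Wikidata identifier are placeholders to replace with your own, and a production page would usually carry more properties than this.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a minimal schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs links the entity to its other authoritative profiles.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


snippet = organization_jsonld(
    "Acme Analytics",                                  # placeholder name
    "https://www.example.com",                         # placeholder URL
    ["https://www.wikidata.org/wiki/Q_PLACEHOLDER"],   # placeholder Wikidata ID
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```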

Perplexity

- Data source: Live web crawling + search APIs

- Optimization lever: Fresh, well-structured content that answers queries directly

- Key insight: Perplexity cites sources explicitly with links. Content with clear H2/H3 question headers and direct answer blocks gets cited most frequently

- Citation style: Most transparent — always shows source URLs

Claude

- Data source: Training data (less real-time browsing)

- Optimization lever: Third-party consensus — mentions across Reddit, forums, industry publications

- Key insight: Claude relies heavily on training data, so third-party mentions that were in the training corpus matter more than recent content

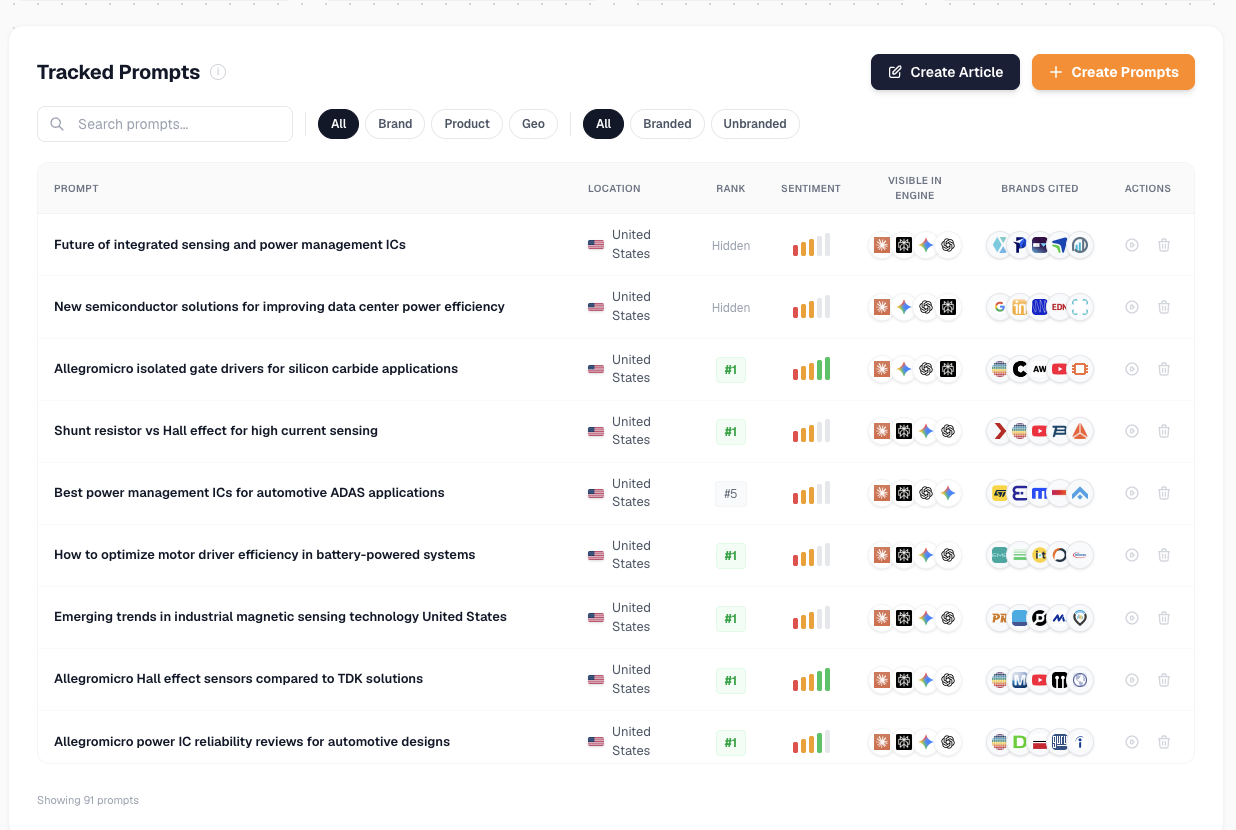

Why Is Prompt Tracking the Most Effective Way to Measure AI Visibility?

Prompt tracking is the most effective AI visibility method because it mirrors exactly how your customers discover brands through AI. Instead of guessing whether your brand “shows up somewhere,” prompt tracking tests the specific questions your audience asks — and records the AI’s exact response every time.

Traditional brand monitoring counts mentions. Prompt tracking answers the question that actually matters: when a customer asks AI for help, does your brand appear in the answer?

How Does Prompt Tracking Work?

- Define your prompt set — Map the 20–50 queries your ideal customers ask AI assistants (e.g., “best CRM for startups,” “top project management tools for remote teams”)

- Run prompts across all engines — Test each prompt against ChatGPT, Gemini, Perplexity, and Claude simultaneously

- Record structured data per prompt — For each query, capture: brand mentioned (yes/no), rank position, sentiment, competing brands, and citation sources

- Track changes over time — The same prompts, tested weekly, reveal exactly which content updates move your visibility
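The per-prompt record described in the steps above maps naturally onto a small data structure. The sketch below is one possible shape, not the schema of any particular tool: the `PromptResult` class and its field names are invented for illustration, and `visibility_by_engine` shows how such records roll up into a per-engine visibility rate.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptResult:
    """One prompt run against one engine (field names are illustrative)."""
    prompt: str
    engine: str
    brand_mentioned: bool
    rank_position: Optional[int] = None
    sentiment: Optional[str] = None
    competing_brands: list[str] = field(default_factory=list)
    citation_sources: list[str] = field(default_factory=list)


def visibility_by_engine(results):
    """Visibility rate (0-100) per engine across a list of PromptResult."""
    totals, hits = {}, {}
    for r in results:
        totals[r.engine] = totals.get(r.engine, 0) + 1
        hits[r.engine] = hits.get(r.engine, 0) + int(r.brand_mentioned)
    return {engine: 100.0 * hits[engine] / totals[engine] for engine in totals}


runs = [
    PromptResult("best CRM for startups", "chatgpt", True, rank_position=2,
                 sentiment="positive", competing_brands=["Globex"]),
    PromptResult("top project management tools for remote teams", "chatgpt", False),
    PromptResult("best CRM for startups", "gemini", False),
]
print(visibility_by_engine(runs))  # {'chatgpt': 50.0, 'gemini': 0.0}
```

Storing one record per prompt and engine keeps weekly runs comparable, since every later metric can be recomputed from the same rows.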

Why Does Prompt Tracking Beat Every Other Measurement Approach?

| Method | Limitation | Prompt Tracking Advantage |
|---|---|---|
| Manual spot-checks | Responses vary by session | Standardized, repeatable prompts reduce variance and make runs comparable |
| Brand monitoring tools | Only track mentions, not context | Captures rank, sentiment, and competing brands per query |
| SEO rank trackers | Don’t measure AI engines | Purpose-built for ChatGPT, Gemini, Perplexity, Claude |
| Social listening | Measures public conversation, not AI recommendations | Measures what the AI actually tells your customers |

How Do You Connect Prompt Tracking to AROI (AI Return on Investment)?

Prompt tracking is also the foundation for calculating your AI Return on Investment (AROI) — the business value generated by your AI visibility efforts.

Here’s the AROI framework:

- Baseline: Run your prompt set and record your starting visibility rate, rank positions, and sentiment scores

- Invest: Implement AI optimization — schema markup, content restructuring, third-party presence building

- Measure: Re-run the same prompt set weekly. Track visibility rate changes, rank improvements, and sentiment shifts

- Attribute: Correlate visibility improvements with downstream metrics — organic traffic from AI referrals, lead volume, conversion rate changes

- Calculate: AROI = (Revenue from AI-driven discovery − AI optimization cost) ÷ AI optimization cost × 100
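The AROI formula in the last step is a standard ROI percentage and can be checked with a few lines of Python. The revenue and cost figures below are hypothetical numbers chosen only to show the arithmetic. Attributing revenue to AI-driven discovery is the hard part and is not covered by this sketch.

```python
def aroi(revenue_from_ai_discovery: float, optimization_cost: float) -> float:
    """AROI (%) = (revenue - cost) / cost * 100."""
    if optimization_cost <= 0:
        raise ValueError("optimization_cost must be positive")
    return (revenue_from_ai_discovery - optimization_cost) / optimization_cost * 100


# Hypothetical figures: 15,000 in attributed revenue against 5,000 of spend.
print(aroi(15_000, 5_000))  # 200.0
```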

The brands that track at the prompt level can tie specific content changes to specific visibility improvements — making AI optimization as measurable as paid search.

Get started: Run a free prompt-level AI visibility audit — Sanbi.ai tests your brand across real customer prompts and delivers a structured report with visibility scores, sentiment, and competitor benchmarks.

Why Is Bing Critical for ChatGPT Visibility?

Here’s something most marketers miss: Bing is the backbone of ChatGPT’s real-time knowledge.

When ChatGPT needs to look something up, it searches Bing. That means:

- If your site isn’t indexed by Bing, ChatGPT literally cannot find you in browsing mode

- If your competitors outrank you on Bing, they get cited instead

- Bing Webmaster Tools is now a critical AI visibility tool, not just an SEO afterthought

Action steps:

- Submit your sitemap to Bing Webmaster Tools

- Ensure robots.txt allows Bingbot

- Check your Bing rankings for key queries, since they directly impact ChatGPT citations

- Optimize for Bing’s ranking factors (structured data, exact-match content, page speed)
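The robots.txt step can be checked with Python's standard-library `urllib.robotparser`. The sketch below parses robots.txt content passed in as text, so it runs offline. The two sample files and the example.com URL are made up for illustration, and fetching your live file is left out.

```python
from urllib.robotparser import RobotFileParser


def bingbot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt content lets Bingbot fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Bingbot", url)


# Sample file that blocks Bingbot entirely (illustrative content).
blocking = """\
User-agent: *
Allow: /

User-agent: Bingbot
Disallow: /
"""

# Sample file that only blocks an admin area for all crawlers.
permissive = """\
User-agent: *
Disallow: /admin/
"""

page = "https://www.example.com/pricing"
print(bingbot_allowed(blocking, page))    # False
print(bingbot_allowed(permissive, page))  # True
```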

How Do You Build an AI Visibility Measurement System That Compounds?

The most effective AI visibility measurement system follows a weekly-monthly-quarterly rhythm: weekly prompt tracking for rapid feedback, monthly trend analysis for strategic adjustments, and quarterly benchmarking against industry averages and competitors. One-off audits are a starting point — a system is what drives improvement.

Weekly Rhythm

| Day | Action |
|---|---|
| Monday | Review weekly AI visibility report |
| Tuesday | Identify prompts where visibility dropped |
| Wednesday | Update or create content targeting gap prompts |
| Thursday | Check competitor movements |
| Friday | Review citation sources: are new competitors appearing? |
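Identifying the prompts where visibility dropped is a comparison between two weekly snapshots. The sketch below assumes each snapshot maps a prompt to its visibility rate (0-100) across engines, which is a data shape chosen for this example. Prompts missing from the newer snapshot are skipped rather than treated as drops.

```python
def dropped_prompts(last_week, this_week, min_drop=0.0):
    """List (prompt, before, after) for prompts whose rate fell by more than min_drop.

    Results are sorted with the largest drop first.
    """
    drops = []
    for prompt, before in last_week.items():
        after = this_week.get(prompt)
        if after is not None and before - after > min_drop:
            drops.append((prompt, before, after))
    return sorted(drops, key=lambda d: d[1] - d[2], reverse=True)


previous = {
    "best CRM for startups": 75.0,
    "top project management tools for remote teams": 50.0,
}
current = {
    "best CRM for startups": 50.0,
    "top project management tools for remote teams": 50.0,
}
print(dropped_prompts(previous, current))
# [('best CRM for startups', 75.0, 50.0)]
```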

Monthly Review

- Compare visibility rate month-over-month

- Track sentiment trends — improving or declining?

- Analyze which content updates moved the needle

- Identify new prompt opportunities from customer conversations

- Review and update schema markup across key pages

Quarterly Strategy

- Benchmark against industry averages

- Reassess competitor positioning

- Evaluate engine-specific performance (ChatGPT vs Gemini vs Perplexity)

- Plan content calendar around visibility gaps

- Review ROI: are visibility improvements correlating with leads/revenue?

What Are the Most Common AI Visibility Measurement Mistakes?

1. Only checking one engine

ChatGPT, Gemini, and Perplexity all have different data sources. Being visible on one doesn’t mean you’re visible on all three. Always measure across all major engines.

2. Testing with branded queries

Of course ChatGPT knows your brand name. The real test is non-branded queries such as “best [category] for [use case]”, where the AI has to decide who to recommend.

3. Measuring once and forgetting

AI models update constantly. Your visibility today can change next week. Continuous monitoring is the only way to stay ahead.

4. Ignoring sentiment

Being mentioned negatively is worse than not being mentioned at all. If an AI engine is citing complaints or issues about your brand, fixing sentiment should be the top priority.

5. Not tracking competitors

Your visibility is relative. If a competitor improves their AI presence, your share of voice drops even if you did nothing wrong.

How Do You Start Measuring AI Visibility Today?

Start by running a free AI visibility audit at sanbi.ai to establish your baseline across ChatGPT, Gemini, and Claude, then set up weekly prompt tracking to measure progress. The brands winning in AI visibility right now are the ones who started measuring 6 months ago. They built baselines, identified gaps, and systematically improved their presence across every engine.

The window is still open — but it’s narrowing. Every week you don’t measure is a week your competitors can pull ahead in the AI answers that are replacing traditional search.

Your next step: Run a free AI visibility audit and see exactly where your brand stands across ChatGPT, Gemini, and Claude. It takes 2 minutes, no credit card required — and you’ll have a clear baseline to start improving from.

Sanbi.ai monitors your brand’s AI visibility daily across ChatGPT, Gemini, Perplexity, and Claude — tracking visibility scores, sentiment, citations, and competitor movements so you always know where you stand.